Reasons to delete social media

November 4, 2020

New documentaries like “The Social Dilemma” on Netflix have reiterated an open secret, known not only to the Silicon Valley elite but also to the general public: social media is as intoxicating as it is draining and harmful. Many, after watching the documentary and others like it, followed a common train of thought: “Oh wow, I didn’t even realize that. I should use my phone less,” before promptly proceeding to consume more of that same content. Pick your poison: TikTok, Twitter, Facebook, YouTube, Snapchat and others all rely on algorithmic recommendations designed to draw and retain as many hours of human attention as possible.

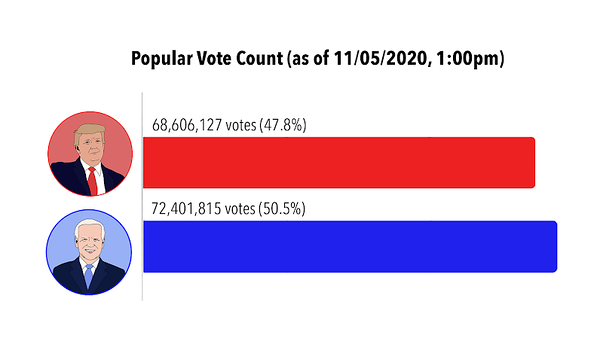

This problem has proved so pervasive that, in the wake of the documentary’s release, the U.S. Senate Committee on Commerce called prominent tech CEOs to testify about their algorithms. While the hearing inevitably devolved into partisan talking points about the election and alleged “anti-conservative bias,” there was some substantive discussion of the issues, including Section 230 of the Communications Decency Act, the U.S. law that protects companies from liability for user content on their platforms. Considering how poorly many lawmakers understand the way technology works (see Google CEO Sundar Pichai’s 2018 testimony), their ability to make substantive changes remains in real doubt. Relying on these companies to decrease political polarization and rampant misinformation themselves is naive at best, because, as Senator Amy Klobuchar quipped, “The way I look at it: more divisiveness, more time on the platform, the company makes more money.”

The worst part about holding big tech accountable for misinformation and addiction is that no viable solution (not just a good solution, but any solution) is immediately apparent. Should the government hold companies fully accountable for what their users post? Give them unlimited leeway? Put labels on any and all misinformation? All of these present grave issues, especially that last one, because as lecturer Tom Scott remarked, “There is no algorithm for truth.”

After becoming increasingly disillusioned with election coverage, I decided to take a pause from the news and social media. From the end of the last presidential debate until the day after the election, I isolated myself from the deluge of algorithmically recommended content that my phone and laptop serve me. By no means was this permanent, and I had already voted by that point. I just wanted to see whether that 12-day break would have some effect on my mental state.

A few days in, I realized just how much I rely on these algorithms for quick hits of dopamine. Most alarming was that, whenever I hit a moment of confusion or frustration while working on homework, I would immediately reach for my phone or start typing in the URL of my preferred news site. Even in the last few days of my “experiment,” that oddly scary impulse was still ingrained in my brain.

I am sure I’m not the only one with that inclination, as the roots of these algorithmic recommendations are buried deep. Quitting TikTok, for instance, proved a challenge even after numerous attempts, especially considering I was previously employed to create TikTok videos. And that was just one network: I am still more than plugged into the news, Twitter, Snapchat and Instagram. The fear of missing out and the sense of disconnection that come with quitting social media, compounded by the platforms’ addictive nature, make it so difficult to quit.

But, as I said earlier, addiction and misinformation are open secrets to everyone involved with these platforms, developers and users alike. And while I laud the incredible research and message of “The Social Dilemma,” I fear that it may fall on deaf ears. I’m reminded of an episode of the series Black Mirror, “Fifteen Million Merits,” in which a young man pulls an elaborate stunt to expose the inner workings of his dystopian society on live television. However, instead of inspiring viewers to join in against the society’s unnamed handlers, his message is (you guessed it) packaged into a general-viewing television show. My fear is that the subject and medium of “The Social Dilemma” render action effectively impossible on a large scale. The documentary was genuinely impactful, well-written, well-researched and expertly produced, but almost inevitably (and incredibly unfortunately) unable to inspire action.

Algorithms that can permanently change everything from our neural connections all the way to our societal ones seem almost too big and scary to control. Nevertheless, we must, in some meaningful way, control them. We need to dedicate people in our government to ensuring that algorithms and AI work in our interests, not against them. We need to ensure that those who represent us know what they’re talking about when it comes to big tech. We, individually, need to try to find some way to monitor the technology we use without becoming recluses. And finally, we have to make sure that our nation’s brightest minds aren’t used solely to maximize user engagement on social media feeds, but are instead dedicated to creating platforms that encourage healthy cooperation between us humans and our technology.